(aka Why I’m a Voltage-Optimisation Sceptic)

Of late I’ve seen reports about how high grid voltage is supposedly a problem and why voltage optimisation may be the solution. But is high grid voltage the villain it’s made out to be?

By Richard Keech

2018-11-08

Voltage scaremongering

I was prompted to write this by the article “Power bills up? Appliances burning out? You may have a voltage problem” by Liz Hobday (ABC, 2018-11-08), though there are others like it. It is the first I’ve seen suggesting that our homes need a gadget to fix the problem by lowering the voltage.

Grid voltage standard

In Australia the current target grid supply voltage was defined by Australian Standard 60038 in 2000 and updated in 2012. It says that at the meter box the grid voltage of our AC supply should be nominally 230 volts, with an acceptable tolerance of minus 6% (216V) to plus 10% (253V). That’s a 37V acceptable range of voltage at the point of supply.

This new voltage standard has been adopted state by state over time (eg Queensland from October 2018). The previous standard was 240V +/- 6% (a 29V acceptable range). Western Australia remains the only Australian jurisdiction still using 240V.
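As a quick check of the tolerance arithmetic quoted above, here is a minimal Python sketch. The function name `supply_band` is hypothetical; the percentages are the ones given in the text.

```python
def supply_band(nominal_v, minus_pct, plus_pct):
    """Return (lowest, highest, span) in volts for an asymmetric tolerance."""
    low = nominal_v * (1 - minus_pct / 100)
    high = nominal_v * (1 + plus_pct / 100)
    return low, high, high - low


# Current standard: 230V -6%/+10%; previous standard: 240V +/-6%
for label, args in [("230V -6%/+10%", (230, 6, 10)),
                    ("240V +/-6%", (240, 6, 6))]:
    low, high, span = supply_band(*args)
    print(f"{label}: {low:.1f}V to {high:.1f}V (span {span:.1f}V)")
```

Printed to one decimal place this gives 216.2V to 253.0V (span 36.8V) for the current standard and 225.6V to 254.4V (span 28.8V) for the previous one, which round to the figures above.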

Why the change? This change to 230V is part of a harmonisation of global grid voltages to allow for common operating conditions for equipment sold around the world. Previously some countries were based on 220V, and others on 240V. The 230V is a compromise middle ground. See here for more information. The international standard is IEC 60038.

What’s the best voltage?

The voltage ranges mentioned here apply at the point of supply, ie the meter box. The voltage is allowed to vary from that within the premises because of the resistance of the wiring. The Australian Wiring Rules (AS 3000) allow an additional 5% variation (drop) on the supply voltage, ie -11% to +10% measured at the power point (range 205V – 253V, a 48V range).
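The same limits can be written as a check on a measured voltage. This is a sketch assuming, as described above, that the extra 5% allowance lowers only the bottom of the range; the names `limits` and `within_limits` are hypothetical.

```python
NOMINAL_V = 230.0
SUPPLY_TOLERANCE_PCT = (-6.0, 10.0)  # at the point of supply (meter box)
EXTRA_DROP_PCT = 5.0                 # further drop allowed within the premises


def limits(at_power_point=False):
    """Return the (low, high) permitted voltage in volts."""
    minus_pct, plus_pct = SUPPLY_TOLERANCE_PCT
    if at_power_point:
        minus_pct -= EXTRA_DROP_PCT  # -6% - 5% = -11%; the upper limit is unchanged
    return NOMINAL_V * (1 + minus_pct / 100), NOMINAL_V * (1 + plus_pct / 100)


def within_limits(volts, at_power_point=False):
    """True if the measured voltage lies within the permitted range."""
    low, high = limits(at_power_point)
    return low <= volts <= high


low, high = limits(at_power_point=True)
print(f"power point: {low:.1f}V to {high:.1f}V")
print(within_limits(210), within_limits(210, at_power_point=True))
```

A reading of 210V, for example, would be too low at the meter box but acceptable at a power point.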

Equipment operating on grid mains supply needs to be designed to work normally and safely at any voltage in the permitted range. So there’s no ‘best’ voltage. If a piece of equipment doesn’t work properly at, say, 253V, then it is at fault.

Increased consumption with high voltage?

Some people are concerned that high grid supply voltage leads to increased consumption, and therefore wasted energy. That’s certainly the suggestion in the Hobday article. Does this happen? It’s complicated. To answer that we have to look at types of electrical load (a ‘load’ means anything that consumes electricity).

Resistive loads

Things like halogen light bulbs and kettles present a simple electrical resistance to the flow of current, so the current increases in direct proportion to the voltage. The power consumed therefore increases with the square of the voltage (P = V²/R). So, all other things being equal, increased voltage does mean increased energy consumed.
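To put numbers on P = V²/R, the sketch below scales a hypothetical 2000W kettle element rated at 230V across the supply range. It assumes the resistance stays constant; a hot lamp filament’s resistance rises with temperature, so a halogen lamp’s power climbs somewhat less steeply than this.

```python
def resistive_power(volts, rated_w=2000.0, rated_v=230.0):
    """Power drawn by a fixed resistance at a given supply voltage (P = V^2 / R)."""
    resistance_ohms = rated_v ** 2 / rated_w  # derived from the rating, assumed constant
    return volts ** 2 / resistance_ohms


for v in (216, 230, 253):
    print(f"{v}V: {resistive_power(v):.0f}W")
```

The element draws roughly 1764W at 216V and 2420W at 253V: a 10% rise in voltage gives about 21% more power (1.1² = 1.21).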

However, all other things are typically not equal. In the case of a halogen light bulb, more power means brighter light, so fewer lights are needed for the same illumination. With a kettle, increased power heats the water more quickly, so the period of operation is shorter. The energy needed to heat the water is largely unchanged.
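The kettle case can be sketched with the heat needed to warm water, Q = m·c·ΔT, which does not depend on voltage; only the run time t = Q/P does. This assumes 1 litre of water weighs about 1kg, takes c ≈ 4186 J/(kg·K), and ignores heat lost to the surroundings during the run. The 2420W figure is what a resistive element rated at 2000W on 230V would draw at 253V, since (253/230)² = 1.21.

```python
SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # water, approximate


def kettle_run(power_w, litres=1.0, start_c=20.0, end_c=100.0):
    """Return (seconds, kWh) to heat water, ignoring heat lost to the surroundings."""
    mass_kg = litres * 1.0  # assume 1 litre of water ~ 1 kg
    energy_j = mass_kg * SPECIFIC_HEAT_J_PER_KG_K * (end_c - start_c)  # Q = m c dT
    seconds = energy_j / power_w  # t = Q / P
    return seconds, energy_j / 3.6e6


for watts in (2000.0, 2420.0):
    seconds, kwh = kettle_run(watts)
    print(f"{watts:.0f}W: {seconds:.0f}s, {kwh:.3f}kWh")
```

The higher-powered run finishes in about 138s rather than 167s, using the same 0.093kWh either way.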

Thermostatically controlled loads

Devices like fridges, column heaters and irons are regulated by a simple thermostat. When they’re cooling or heating, higher voltage means higher power. But higher operating power means the job gets done faster, so they turn off sooner. So higher supply voltage will typically not lead to more energy being consumed for the same job.

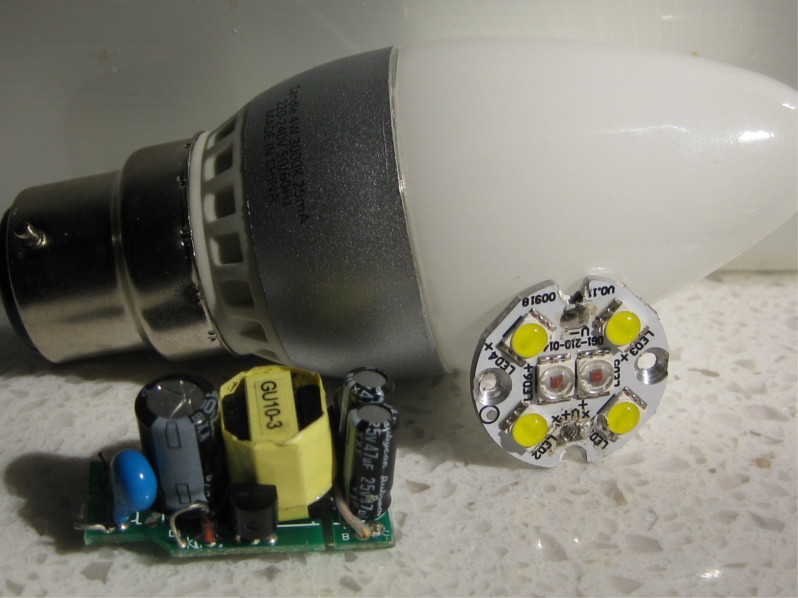
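The thermostat argument can be checked with a crude simulation. This is a minimal sketch, not a model of any real appliance: it treats a column heater in a room as a single thermal mass losing heat to a cooler ambient, switched by a thermostat with a 19°C to 21°C band. The heat capacity, loss coefficient and temperatures are all hypothetical, and the element is assumed to be purely resistive, so its power scales with V².

```python
def simulate_heater(power_w, hours=24.0, dt_s=1.0,
                    capacity_j_per_k=1.0e5,  # hypothetical room + heater thermal mass
                    loss_w_per_k=50.0,       # hypothetical heat-loss coefficient
                    ambient_c=10.0, low_c=19.0, high_c=21.0):
    """Simulate a thermostat-controlled resistive heater.

    Returns (energy_kwh, seconds_on) over the simulated period.
    """
    temp_c = (low_c + high_c) / 2
    heating = False
    energy_j = 0.0
    seconds_on = 0.0
    for _ in range(int(hours * 3600 / dt_s)):
        # Thermostat with hysteresis: switch on at low_c, off at high_c
        if temp_c <= low_c:
            heating = True
        elif temp_c >= high_c:
            heating = False
        heat_in_w = power_w if heating else 0.0
        heat_out_w = loss_w_per_k * (temp_c - ambient_c)
        # Simple forward-Euler step of the lumped thermal model
        temp_c += (heat_in_w - heat_out_w) * dt_s / capacity_j_per_k
        energy_j += heat_in_w * dt_s
        if heating:
            seconds_on += dt_s
    return energy_j / 3.6e6, seconds_on


# Element rated 2000W at 230V; a fixed resistance draws (253/230)^2 times as much at 253V
for label, watts in [("230V", 2000.0), ("253V", 2000.0 * (253 / 230) ** 2)]:
    kwh, on_s = simulate_heater(watts)
    print(f"{label}: {watts:.0f}W element, {kwh:.2f}kWh per day, on for {on_s / 3600:.1f}h")
```

Under these assumptions the higher-powered element runs for noticeably less time each day, but the daily energy is essentially the same: in the long run the heater only has to replace the heat lost from the room, and that depends on the room temperature rather than on the element’s power.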

Electronic loads

Devices like consumer electronics (TVs, computers, phone chargers, LED lights) typically have circuits called switched-mode power supplies which draw only the power that they need, regardless of the supply voltage. The only practical implication of higher voltage (within the specified range) would typically be very slightly higher operating temperatures. There’s an interesting article here about the power regulation in LED lights.
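An idealised regulated load can be modelled as drawing constant power, so its current simply falls as the voltage rises (I = P/V). The sketch assumes a hypothetical 50W device at unity power factor and ignores the small changes in power-supply efficiency with input voltage.

```python
def constant_power_current(volts, power_w=50.0):
    """Current drawn by an idealised regulated load (I = P / V)."""
    return power_w / volts


for v in (216, 230, 253):
    print(f"{v}V: {constant_power_current(v):.3f}A for the same 50W")
```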

Overall effect

In practice, the increase in consumption with voltage only applies to unregulated resistive loads. The only types I can think of that are truly unregulated are old-fashioned incandescent and fluorescent lights. To the extent that they do consume more, they are also giving more light, so arguably the energy is not wasted. In any case, these types of light are disappearing quickly as LED lighting dominates. Other resistive loads like kettles, irons, and column heaters are regulated so that they’ll operate for less time if the voltage is higher.

Wiring losses

All electrical wiring has a small resistance to electrical current, so some energy is always lost in the wires themselves. For every kilowatt drawn by an end-use device, a non-trivial number of watts is lost in the wiring that feeds that device, all the way back to the generator. The power lost in the wires increases with the square of the current (P = I²R). So consider a given regulated load (say a computer at 50W) in two scenarios, high voltage and low voltage. In both cases the device draws the same power, but in the high-voltage case the current is lower, so less power is lost in the wiring. A 10% reduction in current means a 19% reduction in energy lost in the wires (0.9² = 0.81).
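The wiring-loss argument in code: for a constant-power load the current is I = P/V and the wiring loss is I²R, so the loss scales with 1/V². The 0.5Ω round-trip wiring resistance is a hypothetical figure; only the ratios matter.

```python
def wiring_loss_w(load_w, volts, wire_ohms):
    """Power dissipated in the supply wiring feeding a constant-power load."""
    current = load_w / volts         # regulated load: I = P / V
    return current ** 2 * wire_ohms  # resistive heating in the wires: I^2 R


WIRE_OHMS = 0.5  # hypothetical round-trip wiring resistance
low_v_loss = wiring_loss_w(50, 216, WIRE_OHMS)
high_v_loss = wiring_loss_w(50, 253, WIRE_OHMS)
print(f"216V: {low_v_loss * 1000:.1f}mW, 253V: {high_v_loss * 1000:.1f}mW, "
      f"{1 - high_v_loss / low_v_loss:.0%} less at 253V")
```

Going from 216V to 253V cuts the wiring loss for the same 50W load by about 27%, since (216/253)² ≈ 0.73. The absolute losses for a single 50W device are tiny; it is the proportional effect across the whole network that matters.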

The end result is that, for a constant-power load, higher voltage means less energy lost in the wiring, including the grid wiring. That’s why our transmission networks operate at very high voltages.

Voltage optimisation

There’s a market for equipment that uses sophisticated power electronics to adjust the grid supply voltage where it enters the premises, so that the voltage passed into and throughout the premises is stabilised at or close to the grid nominal voltage. These devices are called voltage optimisers. This equipment can also improve the quality of the supply by eliminating spikes and harmonics (types of voltage disturbance) that might affect some equipment, and can correct the power factor.

I’ve seen this type of equipment adopted in commercial and industrial contexts, and the Hobday article indicates that it is now also available for residential use. Claims are often made of significant reductions in consumption from fitting this equipment. I’m yet to be convinced of the cost-effectiveness of voltage optimisation because:

- the types of loads that benefit from voltage optimisation are typically dumb and inefficient, and the money might be better spent updating the loads themselves. For example, replacing halogen lamps with LEDs;

- voltage optimisation equipment itself consumes energy, so any reduction in consumption by dumb loads will be at least partially offset by the consumption of the optimisation gear;

- if you want to eliminate spikes and harmonics then that can be achieved with power conditioning equipment which is simpler and cheaper than voltage optimisation; and

- voltage optimisation equipment is typically expensive.
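The first two objections can be framed as a rough energy balance. In this hypothetical model only the unregulated resistive share of consumption scales with V², regulated loads are assumed to use the same energy at any voltage in range, and the optimiser’s own loss is a fixed fraction of the energy passing through it. Every number in the example call is an assumption chosen for illustration, not a measured value, and part of any ‘saving’ on lighting is simply dimmer light.

```python
def net_saving_kwh(total_kwh, unregulated_fraction, v_in, v_out,
                   optimiser_loss_fraction):
    """Hypothetical energy balance for a voltage optimiser (kWh per year).

    Assumes only the unregulated resistive share scales with V^2 and that
    regulated loads use the same energy at any voltage within tolerance.
    """
    unregulated_kwh = total_kwh * unregulated_fraction
    # Energy reduction in unregulated loads when the voltage drops v_in -> v_out
    reduction_kwh = unregulated_kwh * (1 - (v_out / v_in) ** 2)
    # The optimiser's own losses, as a fraction of the energy it handles
    optimiser_kwh = total_kwh * optimiser_loss_fraction
    return reduction_kwh - optimiser_kwh


# All inputs are illustrative assumptions, not measurements
print(f"{net_saving_kwh(5000, 0.05, 250, 230, 0.01):.1f} kWh/year")
```

Whether the result comes out positive depends heavily on the unregulated share of consumption and on the optimiser’s own losses.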

The only scenarios I can see where voltage optimisation would make sense are:

- where the local supply voltage cannot be relied upon to be within tolerances;

- where you want it for power-factor correction; or

- where there is specific vulnerable equipment that, for whatever reason, can’t tolerate voltage variation within the normal range.

Solar panels and voltage optimisation

One reason for higher voltages in the local grid is the abundance of solar panels. Fitting a voltage optimiser between an inverter and the grid might, at first glance, seem to allow the inverter to keep on generating when the supply voltage in the street would otherwise cause the inverter to shut off. However, inverters shut off at high grid voltage as a protection measure, to keep the voltage in the local grid within a safe range.

So when there’s lots of local solar generation, I can see no way that a voltage optimiser in a home can change the fact that inverter exports need to be regulated by the grid supply voltage.
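The coupling between exports and local voltage can be sketched with simple volt-rise arithmetic: export current flowing back through the service line raises the voltage at the inverter above the street voltage by roughly I × R. The line resistance, export power and the 255V trip setting below are hypothetical values for illustration, not figures taken from any standard, and unity power factor is assumed.

```python
TRIP_V = 255.0  # hypothetical over-voltage trip setting, for illustration only


def inverter_terminal_voltage(street_v, export_w, line_ohms):
    """Approximate voltage at the inverter while exporting.

    Export current (I = P / V, unity power factor assumed) flowing through
    the service line's resistance raises the inverter voltage by I * R.
    """
    export_a = export_w / street_v
    return street_v + export_a * line_ohms


for street_v in (240.0, 250.0):
    v = inverter_terminal_voltage(street_v, export_w=5000.0, line_ohms=0.3)
    print(f"street {street_v:.0f}V -> inverter {v:.1f}V, trips: {v > TRIP_V}")
```

In this sketch a 5kW export on a 240V street stays under the hypothetical trip setting, but the same export on a 250V street pushes the inverter terminals to about 256V.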

Other reading

Skelton, Paul, ‘When Voltage Varies’, Electrical Connection, 2012, https://electricalconnection.com.au/when-voltage-varies/

Woodford, Chris, ‘Voltage Optimisation’, Explain That Stuff, 2018, https://www.explainthatstuff.com/voltage-optimisation.html

Carbon Trust, ‘Voltage Management: An introduction to technology and techniques’, 2011, https://www.carbontrust.com/resources/faqs/technology-and-energy-saving/voltage-management/